Quantum at PLSE

Post Metadata

You might not know it, but there is a small group at PLSE working on quantum computing. This post is an introduction to what we have been up to and why we think PL ideas have a lot to offer here.

We have been looking at two main problems.

The first is about abstraction. Today, most quantum languages ask you to program in terms of circuits: sequences of low-level unitary operations applied to individual qubits. It is the quantum equivalent of writing a sorting algorithm by wiring together logic gates over raw bits. You could do it, and maybe it’s how it used to be done, but nobody programs that way in classical computing anymore. We build abstractions instead. Quantum programming needs the same shift.

The second is about the hybrid nature of quantum computation. A quantum computer is not a single processor; it is two very different processors, a classical CPU and a quantum processing unit, that must work together. Even simple quantum algorithms require classical decisions mid-execution: teleportation applies corrections based on measurement outcomes, and repeat-until-success protocols loop until a measurement succeeds. The program is not a flat list of quantum instructions — it is a conversation between the two processors. Fault-tolerant quantum computing, which requires layers of error correction with real-time classical feedback, makes this conversation dramatically harder to manage and to compile. Most existing frameworks either ignore this split or force developers to manage it by hand at every stage.

Both of these are programming languages problems. This post introduces two projects, one for each: Aleph, a DSL that raises the abstraction level so you can write quantum programs using familiar operations on integers; and qstack, a compiler framework built around the idea that the quantum-classical boundary should be a first-class part of the design.

Aleph: using quantum ideas without writing quantum code

Quantum programming today means thinking in terms of amplitudes, superposition, and carefully structured circuits. Aleph is a domain-specific language that sidesteps all of that: instead of manipulating qubits, you write arithmetic and boolean operations over integers, and the system maps them onto quantum hardware for you. No Hilbert spaces, no unitary matrices, no quantum mechanics.

The programming model has four core concepts:

- A Ket is a variable that holds multiple values simultaneously. Unlike a classical variable — which holds a single integer — a Ket holds all integers from 0 to $2^w - 1$ at once, in a single quantum register. Think of it as a variable that hasn’t committed to a value yet.

- Arithmetic and boolean operations on Kets apply to all values at once, producing a new Ket of all possible outcomes. This is where quantum parallelism comes in: the hardware evaluates all combinations in a single step.

- where and when filter which values are valid. Under the hood, filtering uses Grover’s amplitude amplification — a quantum algorithm that gives a quadratic speedup over brute-force classical search.

sampleextracts a concrete result — one value drawn at random from the valid possibilities. In a physical quantum system you cannot inspect the full set directly; measurement is the only way to observe a concrete value, and that is whatsampledoes.

Here is a small example:

from aleph_lang import KetInt, sample

a = KetInt(width=3) # all integers 0..7

b = KetInt(width=3) # all integers 0..7

c = a + b # all pairwise sums, 64 combinations

print(sample(c)) # prints one sum: could be 0, 1, ..., or 14

a and b each hold every 3-bit integer simultaneously. c = a + b is not a single addition — it produces a new Ket whose values are all 64 possible pairwise sums, computed in one step. sample(c) returns one of those sums at random.

Things get more interesting when you add constraints. Here is a graph coloring problem: given a graph, assign each node a color such that no two adjacent nodes share one. In Aleph embedded in Python, this looks like:

from aleph_lang import KetInt, sample

def graph_coloring(nodes_count, edges, max_colors):

colors = [KetInt().where_less_than(max_colors) for _ in range(nodes_count)]

valid = colors[edges[0][0]] != colors[edges[0][1]]

for (i, j) in edges[1:]:

valid = valid & (colors[i] != colors[j])

return sample(colors, when=valid)

Each node gets a Ket representing its color. The constraint says adjacent nodes must differ. Compare this to a classical brute-force approach: you would enumerate every possible color assignment one by one, check whether it satisfies all the edge constraints, and stop when you find one that does. With many nodes and colors, that can be a lot of combinations to check.

Aleph does something different. Because each Ket holds all possible colors at once, the full space of assignments exists simultaneously. The when=valid constraint is compiled into a quantum circuit that checks all assignments in parallel. Then, through a process called amplitude amplification, the valid assignments are progressively reinforced — made more likely to appear — while the invalid ones are suppressed. When you finally sample, you are drawing from a distribution that has already been steered toward valid answers. This takes $O(\sqrt{N/t})$ steps rather than the $O(N/t)$ of classical search, a quadratic speedup that you get for free: write the constraint, and Aleph handles the rest.

Notice what is absent from this code: there are no qubits, no gates, no circuits, and no quantum mechanics. A developer who knows Python and understands the problem can write this program and have it target a quantum computer. Aleph compiles the high-level operations down to quantum circuits automatically.

qstack: making the quantum stack compositional

If Aleph is about making quantum programs easier to write, qstack is about what comes after: compiling and running them on real hardware.

A quantum program written against a high-level gate set must be translated to hardware-native instructions, just like a classical compiler lowers from an IR to machine code. But quantum adds a major complication: error correction.

Why error correction changes everything

Qubits are noisy. Current hardware cannot execute even moderately deep circuits without errors accumulating and destroying the result. The fix is quantum error correction (QEC): you encode each logical qubit into multiple physical qubits using a redundancy scheme. For example, a simple repetition code encodes one logical qubit into three physical qubits; the Steane code uses seven; the Shor code uses nine; other codes may use hundreds.

But encoding is only the beginning. During execution, you must repeatedly measure auxiliary qubits to detect whether an error has occurred (this is called syndrome extraction), decode those measurements classically to figure out what went wrong, and apply a correction, all in real time, while the computation is still running. What was a purely quantum program becomes a hybrid quantum-classical feedback loop: the quantum circuit pauses at each measurement, the CPU reads the results and decides what correction to apply, and then quantum execution continues — all in real time.

And the layers stack. You can concatenate codes (encode an already-encoded qubit a second time) for higher reliability. Each layer multiplies the number of physical qubits and adds its own syndrome measurements and decoding logic. A fault-tolerant program may have multiple layers of error correction, each with its own classical feedback, all interleaved with the algorithm’s own measurement-driven logic.

The compiler problem

This is where things break down in existing frameworks. Each compilation step (translating gates, inserting error correction) may change the measurement structure of the program. A callback that was written to pop one measurement bit and decide whether to apply a correction now finds three bits on the stack (or seven, or nine). The classical logic is wrong unless someone rewrites it for the new encoding. In frameworks like Qiskit, this adaptation is manual work, repeated at every layer and for every level of code concatenation.

qstack’s approach: opaque callbacks and automatic wrapping

qstack is built around a purely quantum intermediate representation. Classical logic never appears inside the IR; it lives entirely behind opaque callbacks: functions in the host language (C++, Rust, Python, or anything else) that the compiler never inspects or modifies. Because the IR imposes no classical language of its own, callbacks can be written in any language, use any libraries, and make decisions based on arbitrary runtime context such as hardware characteristics, decoder performance targets, or calibration data. The quantum compiler does not need to understand any of it.

A kernel in qstack follows a fixed cycle: allocate a qubit, apply instructions, measure, and invoke a callback with the result. Each measure names a callback to invoke when that qubit is measured; done is a special built-in callback that signals the kernel has finished and its qubit can be freed. Here is a teleportation program written in qstack. You do not need to know what quantum teleportation does — what matters is the structure: three qubits are allocated, some gates are applied, and measurements fire callbacks that decide what corrections to apply:

allocate q0:

allocate q1:

h q1

allocate q2:

cx q1 q2

cx q0 q1

h q0

measure done

measure fixup

measure done

And the classical callback, written in Python:

def fixup(mstack):

m1 = mstack.pop()

m0 = mstack.pop()

instructions = []

if m1 == 1:

instructions.append(X("q0"))

if m0 == 1:

instructions.append(Z("q0"))

if instructions:

return Kernel(instructions, done)

return None

The quantum part is a stack of nested kernels. The classical part (the fixup callback) pops two measurement bits and decides what correction to apply. The compiler sees only the quantum instructions and a callback reference; it never looks inside fixup.

How compilation works

A compiler pass identifies all kernels and replaces each instruction with its equivalent in the target. For a simple ISA translation (say, Cliffords to trapped-ion native gates), each gate maps to one or more target gates. For a QEC pass, a single logical gate can expand into an entire sub-kernel that operates on multiple physical qubits, allocating ancillae, applying physical gates, and performing syndrome measurements, all within the same IR.

The tricky part is the callbacks. When a QEC pass encodes one logical qubit into three physical qubits, measuring the logical qubit now produces three physical measurement bits instead of one. But fixup was written expecting the logical interface — two logical bits, one per qubit measured. It has no idea that each of those logical bits is now represented by three physical ones. If you run fixup directly against the physical output, it will pop the wrong number of bits and break.

qstack solves this by wrapping each callback in an adapter. Here is what happens to fixup after a bit-flip rep-3 pass:

- The wrapper pops the 3 physical measurement bits from the stack.

- It majority-votes them into 1 logical bit (2 out of 3 agree → that’s the answer).

- It pushes the logical bit back onto the stack.

- Now the stack looks exactly as

fixupexpects, sofixupruns unchanged.

There is a second part to wrapping. fixup can return a new kernel — for example, a kernel that applies an X correction. That kernel is written in the source gate set (before compilation). So the wrapper also compiles any returned kernel through the same pass before handing it back for execution. This is what makes dynamic quantum-classical interaction work correctly across compilation stages. Note how unusual this is: most compilers assume the program is fully known at compile time. Here, a callback can emit new quantum instructions at runtime — and those instructions must themselves be compiled on the fly, through every pass in the pipeline, before they can execute.

The framework provides all the wrapping boilerplate. A concrete compiler pass only needs to implement two things: gate handlers (how to translate each gate) and a decode method (how to recover logical bits from physical ones).

Composition

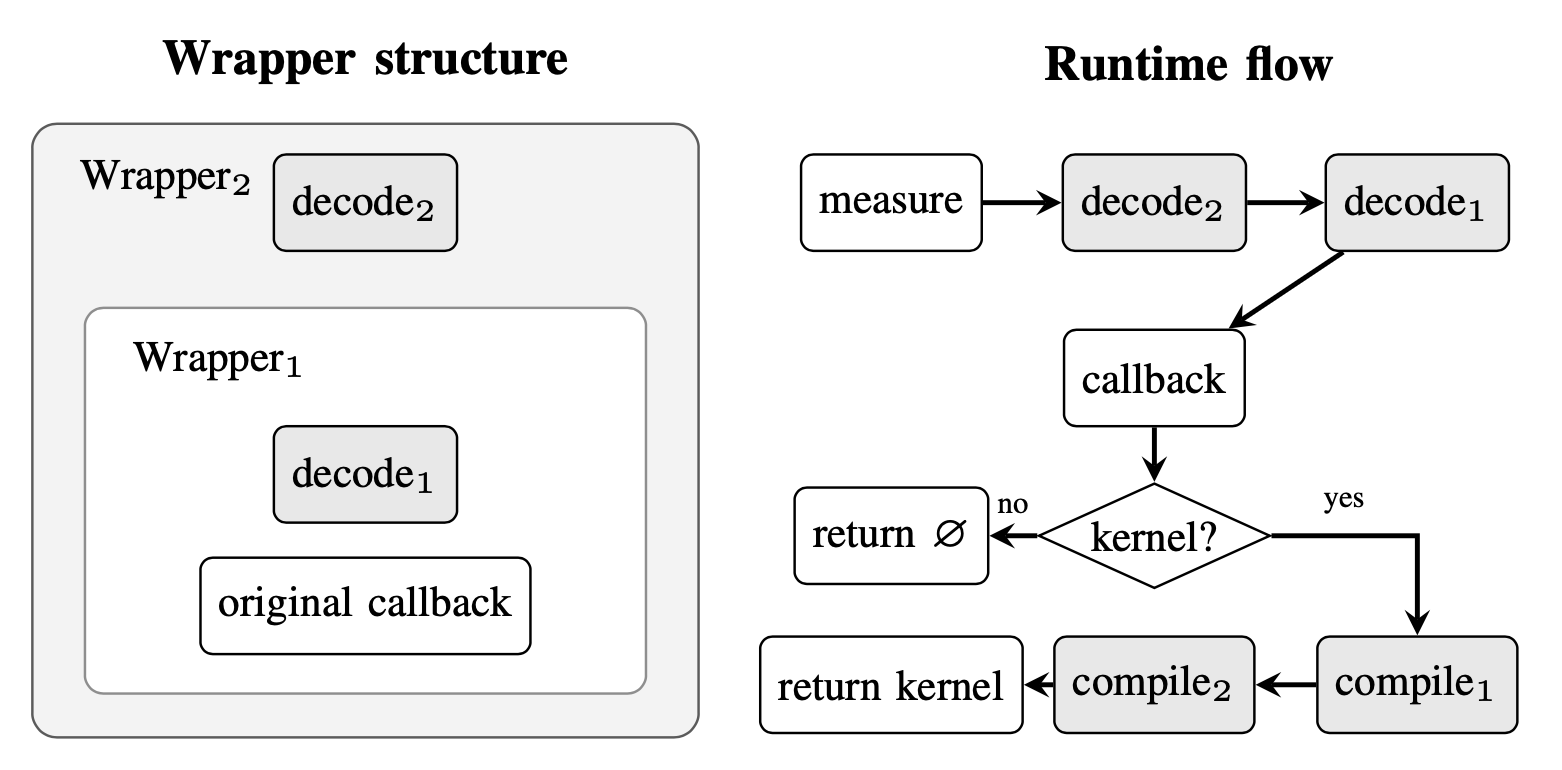

Wrapping composes naturally across passes. Each pass wraps the callbacks produced by the previous pass, creating a chain of adapters. The following diagram shows what this looks like after two passes:

On the left is the wrapping structure: pass 2 wraps pass 1, which wraps the original callback. On the right is the runtime flow when a measurement fires: decoders run outside-in (pass 2’s decoder first, then pass 1’s), restoring the measurement stack at each layer. If the callback returns a new kernel, compilation runs inside-out (pass 1 first, then pass 2). No pass needs to know about any other pass in the chain.

The result is that compilation pipelines are just function composition:

p1, c1 = rep3_bit_compiler.compile(program, callbacks) # + bit-flip QEC

p2, c2 = rep3_phase_compiler.compile(p1, c1) # + phase-flip QEC

p3, c3 = trapped_ion_compiler.compile(p2, c2) # → hardware-native

After these three passes, the program implements the Shor code and targets trapped-ion hardware. The Shor code is a well-known error-correcting code that protects against arbitrary single-qubit errors by encoding each logical qubit into 9 physical qubits — but notably, it is equivalent to composing a bit-flip repetition code with a phase-flip repetition code. Neither code alone corrects arbitrary errors, but together they do. In qstack, that composition falls out automatically from chaining two simple passes; the Shor code is not a special case, just function composition. Hardware targeting is then one more pass on top. The fixup callback is written once and never modified; it is automatically wrapped with the correct decoders at each layer. In qstack, adding a new error-correcting code or a new hardware backend is achieved via a single new compiler pass.

What’s next

One natural direction is formal verification. qstack’s compositional structure is a good fit for this: each compiler pass operates independently, so it can be verified in isolation. A one-time proof that the wrapping mechanism preserves semantics would give end-to-end correctness guarantees for any pipeline built from verified passes — including code concatenation and hardware targeting.

Another direction is Aleph-OS, which tackles a different layer of the problem: the runtime. A fault-tolerant quantum computer does not just execute a compiled program and return a result. It must orchestrate a continuous real-time loop: schedule syndrome extraction, run classical decoders, apply corrections, and feed results back into the quantum processor, all under tight timing constraints. Who coordinates all of that? Aleph-OS explores what a runtime architecture for fault-tolerant quantum systems might look like: how to organize the interaction between the QPU, the decoder, and the classical controller in a way that is reliable and scalable.

At every layer (languages, compilers, runtimes) these turn out to be programming languages problems. We are just getting started. If you want to follow along or get involved, the projects are on GitHub: Aleph, qstack, and Aleph-OS.

Acknowledgments

This work is joint with Dan Grossman, who has been a collaborator and advisor throughout.